Will AI Concierge be realized in 2030? The future of KDDI's R & D (Part 1) | TIME & SPACE by KDDI

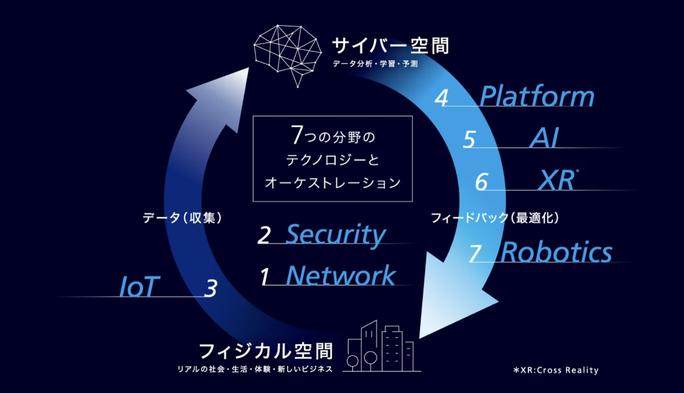

What will the future look like 10 years from now? In August 2020, KDDI and KDDI R & D Laboratories announced "KDDI Accelerate 5.0," a next-generation social concept with an eye on 2030. The aim is to create new lifestyles by advancing seven areas of research and development that tightly integrate real (physical) space and virtual (cyber) space.

The seven R&D areas are "network," "security," "IoT," "platform," "AI," "XR," and "robotics."

What kind of future will this bring about, and how will it change our lives? What challenges must be overcome before it becomes reality? Using several cases as examples, we interviewed researchers from various fields at KDDI R & D Laboratories.

Case 1: AI concierge supports daily life

Take, for example, a concierge in the form of an AI robot. When you wake up in the morning, the AI concierge helps you start the day through conversation: "Good morning. It's a very nice day today. Shall I go over today's schedule?" It also prepares the meal best suited to your mood that day. It is a scene often seen in movies and anime, but will such a future really come?

Researchers specializing in fields such as AI and robotics at KDDI R & D Laboratories respond.

From left: Kenmei Wu (chat dialogue AI), Emiko Mizuguchi (healthcare / diet analysis), Hiroshi Hanano (robotics), and Naoto Takeda (human cooperation AI) of KDDI R & D Laboratories

Three challenges to realize free conversation with AI

Wu: I mainly research conversational AI, the technology that enables smooth dialogue between people and AI. My dream and ultimate goal as a researcher is an AI you can talk with freely, like Doraemon: one that grows alongside you as a member of the family and sometimes offers advice. There are three challenges.

KDDI R & D Laboratories Kenmei Wu (Chat Dialogue AI)

The first challenge is how the AI "understands the user": what emotions the person it is talking to has, and what that person wants. A key point is whether the AI can extract keywords and the meaning of utterances from the user's words and discover their hobbies and tastes through dialogue.

The second challenge is how to achieve natural conversation. This is the hardest of the three. Replying with appropriate words that fit the other person's emotions and the context of the conversation, as humans do with each other, is said to be more than 100 times harder than image or voice analysis. At present, it is becoming a field that only large companies such as Google and Meta are working on.

For example, in Japanese it can be difficult to judge whether the word "OK" on its own means "yes" or "no." According to research in the United States, words account for 30% of the cues used to read emotion in human communication; most of the judgment rests on nonverbal cues such as facial expressions, eye movements, and gestures, which are said to play a major part in understanding emotion. The research is considered difficult because the amount of information that serves as the basis for judgment is so large.

The third challenge is "advice." Passive conversation, in which the AI answers a user's "tell me ..." request, is already possible, as Siri and Google Assistant show. The question is whether AI can offer words that support the other person's decision making and motivation, that is, words of advice. This is also a major issue in dialogue AI research. Even for a real person, giving advice that suits the individual is difficult. The question is how to have AI learn to do it.

Overcoming these three challenges is the key to realizing smooth conversation between people and AI.

Giving AI feedback so it can grow

Takeda: In my research field too, how AI can act proactively is a major issue. As Mr. Wu mentioned, today's smart speakers can already control devices passively in response to user requests such as "turn on the lights" and "play music." However, some people may feel that such a passive service is not much different from flipping the switch themselves.

KDDI R & D Laboratories Naoto Takeda (Human Cooperation AI)

It would be very convenient if AI acted proactively, along the lines of "I thought you would ◯◯, so I did ◯◯ in advance." However, this is very hard to achieve. Even among humans, someone who tidies a room out of kindness may be met with anger: "Why did you clean up without asking?" What is desirable for one person is not necessarily desirable for another, so it is difficult even for AI to always take the best action, and some of its actions will be seen as mistakes.

To resolve this issue, it is essential both to obtain feedback from each user and to explain to the user why the AI made the mistake. We want to create a mechanism so that, when the AI makes a mistake, the user does not lose trust and conclude "I can't use this AI anymore," but instead tells the AI what they actually wanted, making it steadily smarter. Once this framework, in which AI grows through human feedback while keeping human trust, is complete, I think a good AI concierge suited to each person will be born.

Building a relationship of trust through dialogue with AI

Mizuguchi: Building a relationship of trust with AI is an important perspective. In the field of data health, I research health support from the consumer's point of view. In particular, in collaboration with a medical institution, I am working on AI that analyzes video of people eating, gives advice on how to eat and on nutrition, and helps users acquire appropriate eating habits. When I tried an existing meal image analysis AI service on a photo of mango shaved ice, for example, the AI mistakenly judged it to be "Katsudon, 500 kcal." As Mr. Takeda says, if the AI returns a judgment that is far from the correct answer in actual use, the user may think "That's wrong" or "This AI is useless" and stop using the service.

KDDI R & D Laboratories Emiko Mizuguchi (Health and Meal Analysis)

Of course, the accuracy of image analysis itself needs to improve. At the same time, my goal is to create an environment in which users get into the habit of patiently teaching the AI, "This is not katsudon, it's mango shaved ice," so that people and AI can build a relationship of trust. I believe that if communication is used to raise the AI's accuracy, we can realize AI that makes proposals suited to each user.

In addition, image analysis alone has limits when it comes to things the human eye cannot see, such as the amount of salt dissolved in a dish. We are therefore also developing technology that supplements the missing information so that the AI can give advice better suited to the user, for example by having the AI ask the user which seasonings they used and how much, or by registering frequently used seasonings in advance.

――― So in the future, rather than treating AI as a mere home appliance or robot, we will need to teach it as we would a child who does not yet know anything, or grow together with it like family.

Mizuguchi: Yes. As such a relationship develops, the AI will be able to give more fine-grained advice. Instead of a uniform ◯ or ✕ judgment such as "You're consuming too many calories," it could say something like, "It's okay. You're going on a business trip from tomorrow, aren't you? Be careful not to take in too much salt when eating out."

Challenges for the spread of robots from the perspective of robotics research

――― When AI evolves in this way, are there any issues in the field of robotics?

Hanano: Yes, there are. My research field is robotics. I envision a future in which many robots are present in cities and homes in 2030, and my research supports controlling such large numbers of robots efficiently.

KDDI R & D Laboratories Hiroshi Hanano (Robotics)

When we imagine a society permeated by robots in this way, the biggest obstacle to widespread adoption right now is the cost of robots. They are currently very expensive because everything is packed into the robot itself: the hardware that forms the robot's body, the development of its AI, and the high-performance PC needed to run that AI.

If, for example, the AI portion can be moved to the cloud, a high-performance PC no longer needs to be installed on the robot, and if AI can be developed and used jointly, robots can be built cheaply as hardware, which lowers the barrier to adoption. Robotics spans many areas, including hardware, communication, and security, so cost is not the only obstacle to widespread use. Still, I believe that a future in which robots are widespread lies beyond my research, which aims to prepare a platform for each of these areas and a cost-effective environment for building robots.

What future lies beyond the research?

――― What kind of future awaits as your current research progresses?

Wu: Dialogue AI aims to realize free conversation between people and AI: an entity that understands the user and offers advice to support daily life and personal growth. That requires analyzing not only words but also fleeting physical signals such as facial expressions and gestures, so I would like to make full use of vital-sign sensors and facial expression recognition.

Takeda: In my research, I envision a future in which many home appliances and pieces of furniture are interconnected and AI controls them proactively. You would not need to check tomorrow's forecast before bed and think, "It looks cold in the morning, so I'll set a timer." The AI would decide, "This person is about to wake up, and the temperature is low today, so I'll turn on the air conditioner in advance." It would set out plates and chopsticks when you start cooking, and a broken appliance would report on its own, "This is broken." Because it is difficult for AI to control things exactly as the user wishes from the start, when it makes a mistake it should explain why it acted as it did, gain the user's understanding, and draw out and accumulate more specific feedback. By establishing the technology to keep doing this, we would like to move toward realizing such a future.

Mizuguchi: In a world where AI and robots are widespread, we believe human life expectancy will increase, the era of the 100-year life will become commonplace, and healthy life expectancy will attract more attention than life expectancy itself. The question then is how to support lifestyle habits that keep people from getting sick. Drawing on genetic analysis and on individual traits, such as a person tolerating alcohol less well than other family members, AI would understand each person's constitution and guide them toward a lifestyle that helps them live longer. Even if the person does fall ill, AI would propose and support meals matched to their symptoms and physical condition, for example lower in salt or easier on the body. I would like to support extending healthy life expectancy in this way.

Hanano: I think the goal is a smart city. As life expectancy lengthens and the birth rate stays low, there will be fewer people of working age in 2030 and a labor shortage will follow, so a life that is convenient now may become inconvenient in the future. One solution is robots that support labor-intensive services. Many people already order heavy items such as water, as well as daily necessities, online for delivery. Once robots deliver in place of human delivery staff, people will be able to place frequent small orders without hesitation. The idea of stocking up will disappear, and you may not need space at home to store things in the first place. You may not even need a kitchen. That space can then be put to other uses, and the cost performance of daily life will improve. We would like to build the behind-the-scenes environment that supports robots as they deliver convenient services in place of humans.

――― When we think of the future 10 years from now, it is easy to imagine something visual such as VR and AR, but today I saw a rich, down-to-earth future in which AI and robots enrich our everyday lives.

Hanano: We at KDDI R & D Laboratories place great importance on the consumer's perspective. Rather than looking only at a glittering future, we will listen to today's problems and create the future together. I would like to keep pursuing research in that spirit.

